How content-adaptive compression, accelerated by NVIDIA, addresses video data bottlenecks for physical AI pipelines, including autonomous vehicles, without compromising ML model performance

If you’re developing physical AI applications, you already know the problem: Massive datasets are required for training and validation of autonomous vehicles (AV), robotics, and smart spaces – accumulating to tens or hundreds of petabytes. The visual content and synthetic data autonomous machines need to perceive and sense the world around them is growing faster than your infrastructure can handle.

You have likely realized that video data compression is essential for managing escalating storage costs, tight transfer bandwidth, infrastructure limits, and training requirements. Many professionals are searching for a validated approach to video data compression at scale. Some settle for a conservative path, using lossless and near-lossless methods that lower the risk of missing critical visual details but fail to make massive datasets manageable.

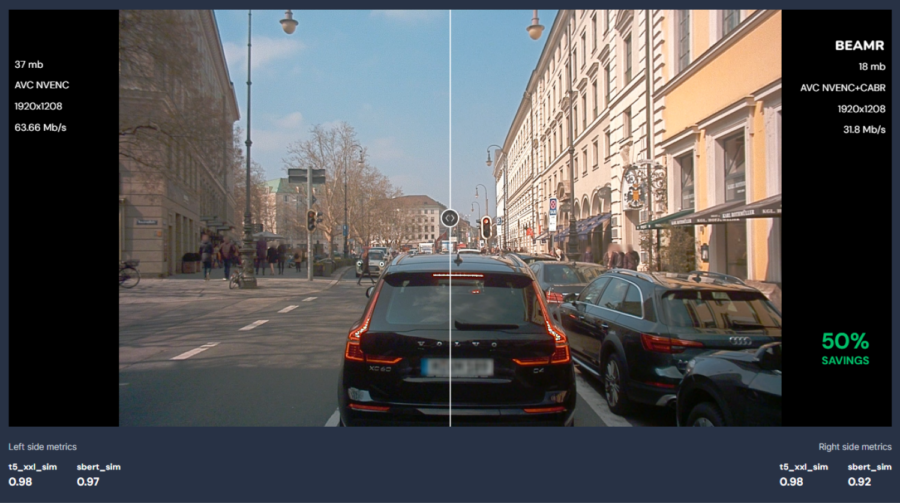

Beamr’s content-adaptive, NVIDIA-accelerated approach delivers ML-safe video data compression while reducing file sizes by up to 50%. This frees up critical storage, networking, and compute resources, and enables faster training and validation. A series of benchmark tests, including with NVIDIA Cosmos, validated Beamr’s approach for autonomous vehicle video data.

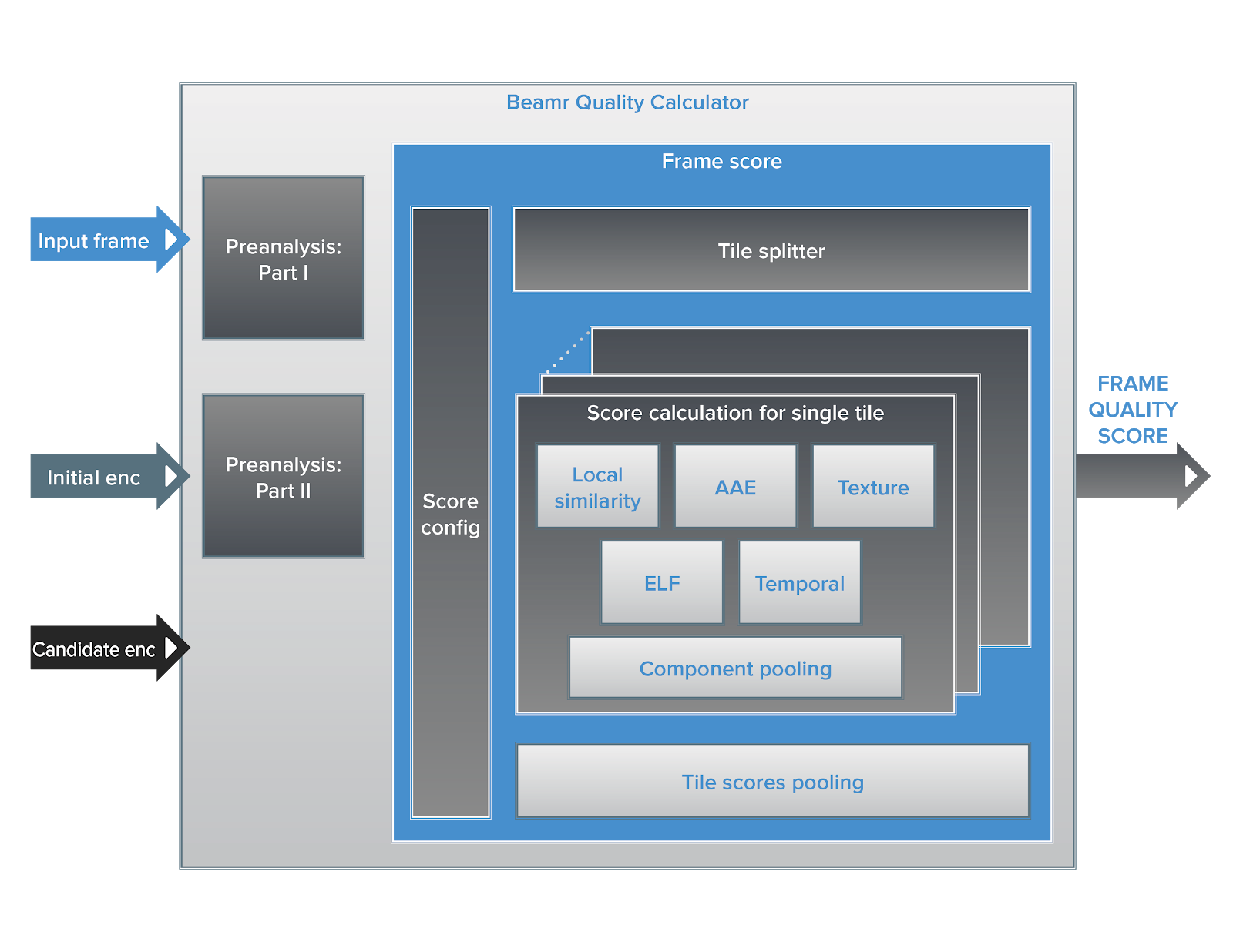

Object detection, classification, and prediction models depend on object boundaries, structural edges, and scene geometry – rather than the smooth gradients and color transitions that human viewers notice. Beamr’s Content-Adaptive Bitrate technology (CABR) addresses that by analyzing footage frame-by-frame, preserving the visual fidelity and cues that ML models rely on – unlike generic compression methods that result in very high data rates or degrade model performance.

———————–

Managing petabyte-scale video data? Meet us at GTC 2026 to discover the ML-safe compression that fits your workflow Click here

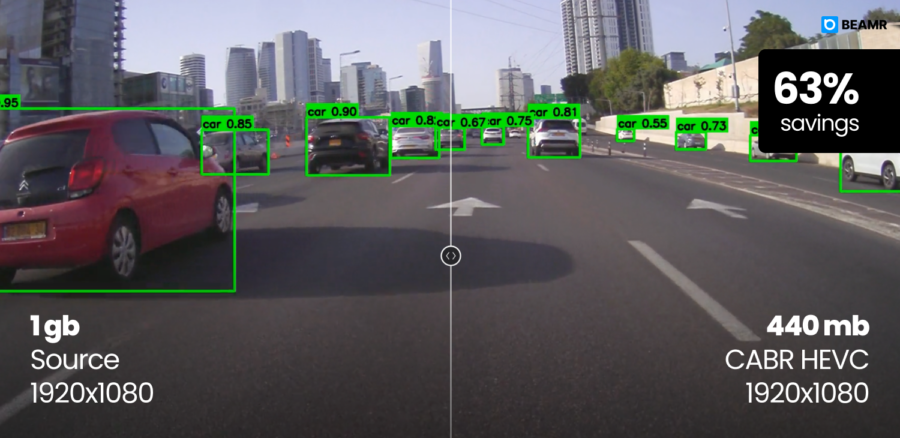

ML-safe video data compression with object detection

Preserving Visual Fidelity for Prediction and Reasoning

Beamr’s CABR is integrated into NVENC and available through NVIDIA AI infrastructure. If NVENC hardware is already in your pipeline, no additional compute infrastructure is required.

The CABR-NVENC system delivers validated compression at multiple points along the data pipeline:

In the cloud and data centers, petabyte-scale datasets can create bottlenecks across training and validation pipelines. The cost of storing those datasets, and the time required to move data through ML pipelines, grow with file size. Beamr’s compression optimizes both newly ingested footage and existing encoded archives on GPU clusters, including NVIDIA L4 Tensor Core, NVIDIA L40, and NVIDIA L40S GPUs. CABR can be deployed as a fully managed service in the cloud, including AWS S3.

CABR-optimized workflow for NVIDIA Cosmos

NVIDIA Cosmos World foundation models are essential for physical AI development, generating visually, spatially, and physically accurate visual content at volumes that often surpass real-world datasets. For NVIDIA Cosmos, Beamr brings ML-aware compression, preserving the visual fidelity that prediction and reasoning models depend on.

Our testing showed that the CABR-optimized workflow delivered 41%-57% better compression while introducing no measurable impact on the captioning pipeline beyond the model’s inherent stochastic variability. The tests covered varied uncompressed AV video sources from several well-known datasets and focused on two measures of the Cosmos Curate AV pipeline outputs: caption text similarity, measured via embedding distances, and model perceptual quality. For structured scene classification, agreement rates on the discrete Visibility, Road Conditions, and Illumination (VRI) attributes remained comparable to the compression baseline and well within expected classification noise.
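To make the "embedding distance versus stochastic variability" comparison concrete, here is a minimal Python sketch. It is not the evaluation code used in the testing: the function names, the toy two-dimensional vectors, and the pass criterion (compression shifts captions no more than a second run on the same source does) are all assumptions made for illustration. In practice the embeddings would come from whatever text-embedding model you already use.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def mean_cosine_distance(embs_a, embs_b):
    """Average cosine distance (1 - similarity) over paired caption embeddings."""
    if len(embs_a) != len(embs_b) or not embs_a:
        raise ValueError("need two non-empty lists of equal length")
    total = sum(1.0 - cosine_similarity(a, b) for a, b in zip(embs_a, embs_b))
    return total / len(embs_a)


def within_noise(compressed_distance, rerun_distance, tolerance=0.0):
    """Hypothetical criterion: compression moves captions no further than a rerun does."""
    return compressed_distance <= rerun_distance + tolerance


# Toy embeddings (hypothetical values, not measured data).
source_run_1 = [[1.0, 0.0], [0.0, 1.0]]      # captions from the source video
source_run_2 = [[0.9, 0.1], [0.1, 0.9]]      # same video, second captioning run
compressed_run = [[0.95, 0.05], [0.05, 0.95]]  # captions from the compressed video

rerun_distance = mean_cosine_distance(source_run_1, source_run_2)
compressed_distance = mean_cosine_distance(source_run_1, compressed_run)
print(within_noise(compressed_distance, rerun_distance))
```

The design point is the baseline: because the captioning model is stochastic, the distance between two runs on the same source sets the noise floor against which any compression-induced shift should be judged.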

Validated solution for Autonomous Vehicles

Beamr’s approach was validated across multiple benchmark tests. Testing with the NVIDIA AV infrastructure team delivered a 23% compression improvement over the current operating point using the same codec, and up to a 40-50% reduction when switching to HEVC or AV1. The tests used real-world AV footage, including challenging scenarios with complex camera configurations and diverse environmental conditions.
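For a rough sense of what those percentages mean at dataset scale, the short Python sketch below applies them to a storage footprint. The 100 PB figure is a hypothetical example, not a measured dataset, and the sketch assumes that each "improvement" or "reduction" figure translates directly into a proportional drop in stored bytes.

```python
def size_after_reduction(size_pb, reduction):
    """Return the footprint in PB after shrinking it by a fractional reduction."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in the range [0, 1)")
    return size_pb * (1.0 - reduction)


dataset_pb = 100.0  # hypothetical archive size, for illustration only

# Reduction figures quoted in the text above.
scenarios = {
    "same codec (23%)": 0.23,
    "HEVC or AV1, low end (40%)": 0.40,
    "HEVC or AV1, high end (50%)": 0.50,
}

for label, reduction in scenarios.items():
    remaining = size_after_reduction(dataset_pb, reduction)
    saved = dataset_pb - remaining
    print(f"{label}: {remaining:.1f} PB stored, {saved:.1f} PB freed")
```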

In additional testing focused on object detection, Beamr’s technology reduced file size by ~48% while keeping the difference in mean Average Precision (mAP) below 2%, well within the model’s expected variance. Testing with compressed footage from the NVIDIA physical AI AV dataset confirmed three things: mAP remains consistently high, indicating that detections and classifications align closely; localization differences are minimal, confirming that bounding-box structure is preserved; and confidence scores remain highly correlated, demonstrating stable model behavior.
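The three checks described above (mAP difference, localization agreement, and confidence correlation) can be sketched in a few lines of Python. This is an illustrative sketch, not the benchmark code: the helper names, the `(x_min, y_min, x_max, y_max)` box layout, the toy values, and the reading of "<2%" as an absolute mAP difference are all assumptions. It also assumes detections from the original and compressed footage have already been matched one-to-one.

```python
import math


def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of confidence scores."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length with at least 2 items")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0.0 or var_y == 0.0:
        raise ValueError("scores must not be constant")
    return cov / math.sqrt(var_x * var_y)


def map_difference(map_original, map_compressed):
    """Absolute mAP difference (assumed interpretation of the '<2%' figure)."""
    return abs(map_original - map_compressed)


# Toy matched detections (hypothetical values, not benchmark data).
boxes_original = [(10, 10, 50, 50), (100, 80, 160, 140)]
boxes_compressed = [(11, 10, 50, 49), (100, 81, 159, 140)]
scores_original = [0.90, 0.80, 0.50]
scores_compressed = [0.88, 0.79, 0.52]

mean_iou = sum(iou(a, b) for a, b in zip(boxes_original, boxes_compressed)) / len(boxes_original)
print(f"mean IoU: {mean_iou:.3f}")
print(f"confidence correlation: {pearson(scores_original, scores_compressed):.3f}")
print(f"mAP difference under 2 points: {map_difference(0.50, 0.49) < 0.02}")
```

A mean IoU near 1.0 corresponds to the "bounding-box structure is preserved" claim, and a correlation near 1.0 corresponds to "confidence scores remain highly correlated".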

Building Confidence at Scale

CABR fits your stack: you can integrate it directly into existing workflows through an SDK or an FFmpeg plugin. It ingests any input and outputs standard codecs: AVC, HEVC, and AV1. For engineers looking to overcome infrastructure bottlenecks and achieve faster development cycles for autonomous vehicles, robotics, and other physical AI applications, Beamr delivers a validated, ML-safe, NVIDIA-accelerated approach with significant improvements over uncompressed datasets or existing compression methods.

———————–

Learn more in our ML-Safe AV Video Data Testing series:

Part 1: Beamr is Pushing the Boundaries of AV Data Efficiency, Accelerated by NVIDIA

Part 2: ML-Safe AV Video Data Processing Achieves Up to 50% Storage Reduction

Part 3: Deep Dive: Managing the Petabyte-Scale AV Video Data Bottlenecks